Tech giant Apple has announced several new accessibility features for iPhone, Apple Watch, and Mac, including a universal live captioning tool, enhanced visual and auditory detection modes, and iPhone access to watchOS apps. The new features will arrive “later this year” as updates roll out across its platforms.

The New Accessibility Features Will Include Real-Time Captions on Videos

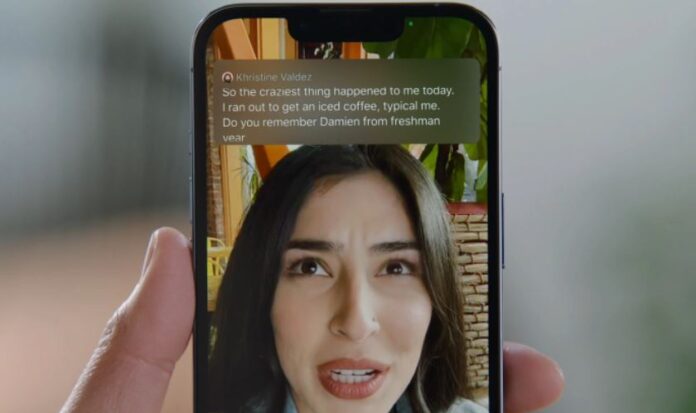

The most widely useful tool is probably live captioning, a function already popular thanks to apps like Ava, which recently raised $10 million to expand its offering. Apple’s tool will work much like Ava’s, captioning in real time any spoken content a user encounters, from videos and podcasts to FaceTime and other calls.

FaceTime in particular will get a dedicated interface: a scrolling transcript above the video windows that attributes captions to each speaker. Captions can be enabled in the standard accessibility settings. Once enabled, they can be quickly toggled on and off, and the pane in which they appear can be expanded or collapsed.

watchOS Apps Can Be Mirrored to iPhone Screens

Apple Watch accessibility will also improve on two fronts. First, new hand gestures will help people who have trouble with fine interactions on the device’s tiny screen, such as amputees. A set of new actions is available through gestures like a “double pinch,” letting users pause a workout, take a photo, answer a phone call, and more. Second, watchOS apps can be mirrored to an iPhone’s screen, where the phone’s other accessibility features can be used. This will also help anyone who values the Apple Watch’s smartwatch-specific use cases but has trouble interacting with the device on its own terms.

Source: Apple