Apple has delayed the rollout of its child safety features after facing a backlash over privacy concerns. Last week, Apple announced the features, which are intended to curb child sexual abuse.

Updates to Apple’s Child Safety Features

Apple announced three child safety features to protect children from predators who use communication tools to recruit and exploit them.

– The first feature affects Apple’s Search app and Siri. If a user searches for topics related to child sexual abuse, Apple will either direct them to resources for reporting it or explain that interest in this topic is harmful and problematic, and point them to partner resources for getting help.

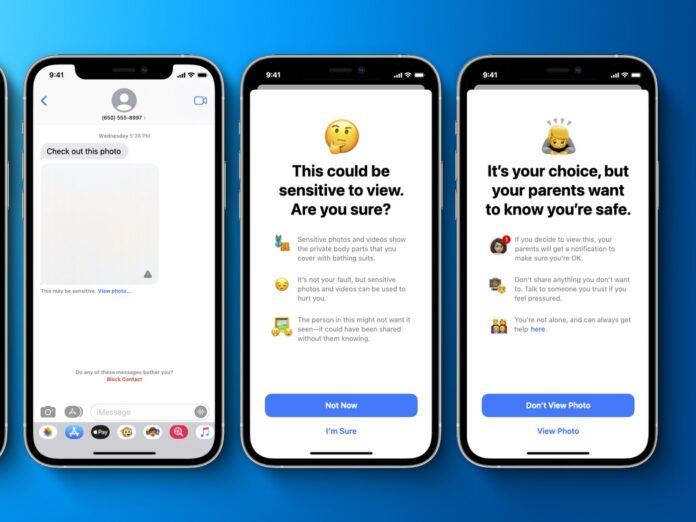

– The second feature adds a parental control to Messages that prevents users under 18 from sending and receiving sexually explicit pictures. It will also alert parents if a child aged 12 or under views or sends such pictures.

– The third feature scans images in iCloud Photos to find child sexual abuse material (CSAM). This will enable Apple to report these incidents to the National Center for Missing and Exploited Children (NCMEC). NCMEC acts as a comprehensive reporting center for CSAM and works in collaboration with law enforcement agencies across the United States.

Apple delays the rollout

Privacy advocates such as the Electronic Frontier Foundation warned that the technology could be used to track things other than child pornography, opening the door to other abuses.

Due to the backlash, the company said it will pause testing the tool to gather more feedback and make improvements. Many child safety and security experts lauded the intention behind the plan, but they also said the effort presents potential privacy concerns.

The company released a statement saying: “Based on feedback from customers, advocacy groups, researchers and others, we have decided to take additional time over the coming months to collect input and make improvements before releasing these critically important child safety features.”